Shilong Liu Homepage

Hi! This is Shilong Liu (刘世隆). I am a Research Scientist at Bytedance Seed.

I obtained my PhD from the Department of Computer Science and Technology, Tsinghua University, under the supervision of Prof. Lei Zhang, Prof. Hang Su, and Prof. Jun Zhu. I got my bachelor’s degree from the Department of Industrial Engineering, Tsinghua University in 2020.

During most of my PhD years, I interned at the International Digital Economy Academy (IDEA) under the supervision of Prof. Lei Zhang. I also interned at Bytedance, NVIDIA, Shengshu-tech, and Microsoft Research, where I was fortunate to collaborate with talented researchers including Guilin Liu, Zhiding Yu, Chunyuan Li, Hao Cheng, and Jianwei Yang.

My previous work focused on computer vision, multi-modal learning, and LLM agents. Here are some of my representative works:

-

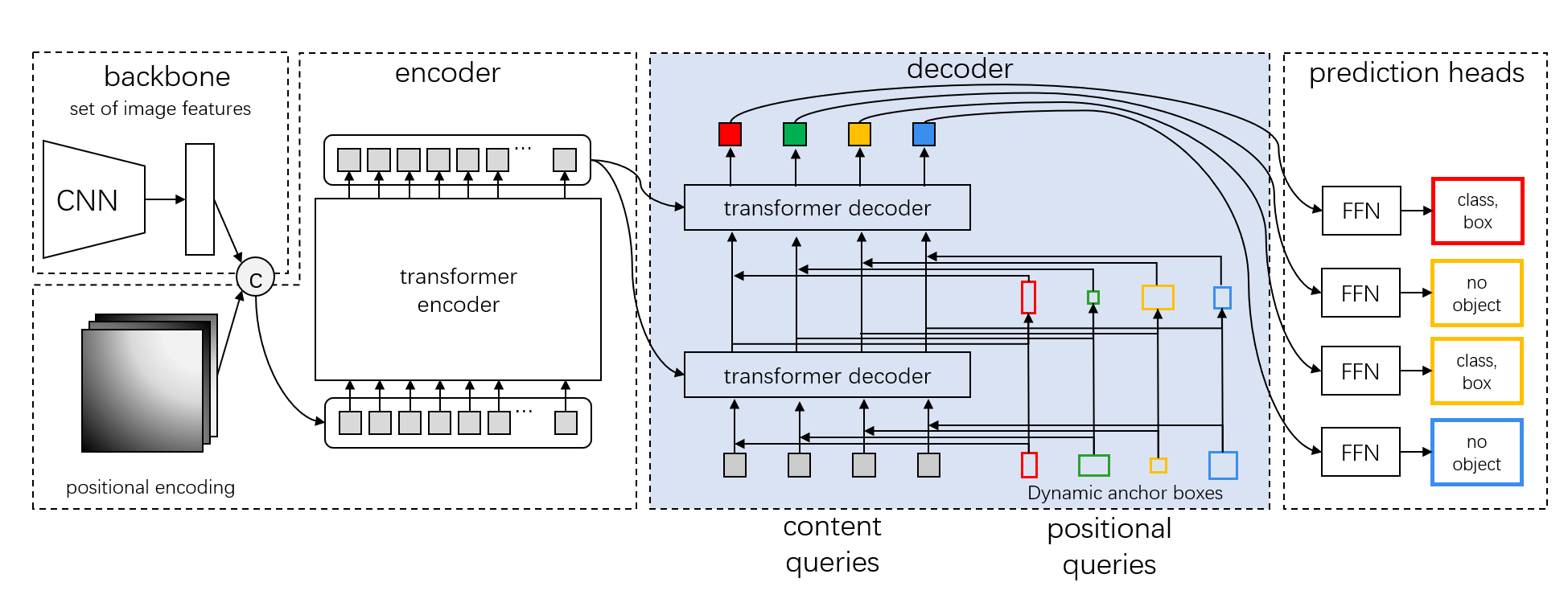

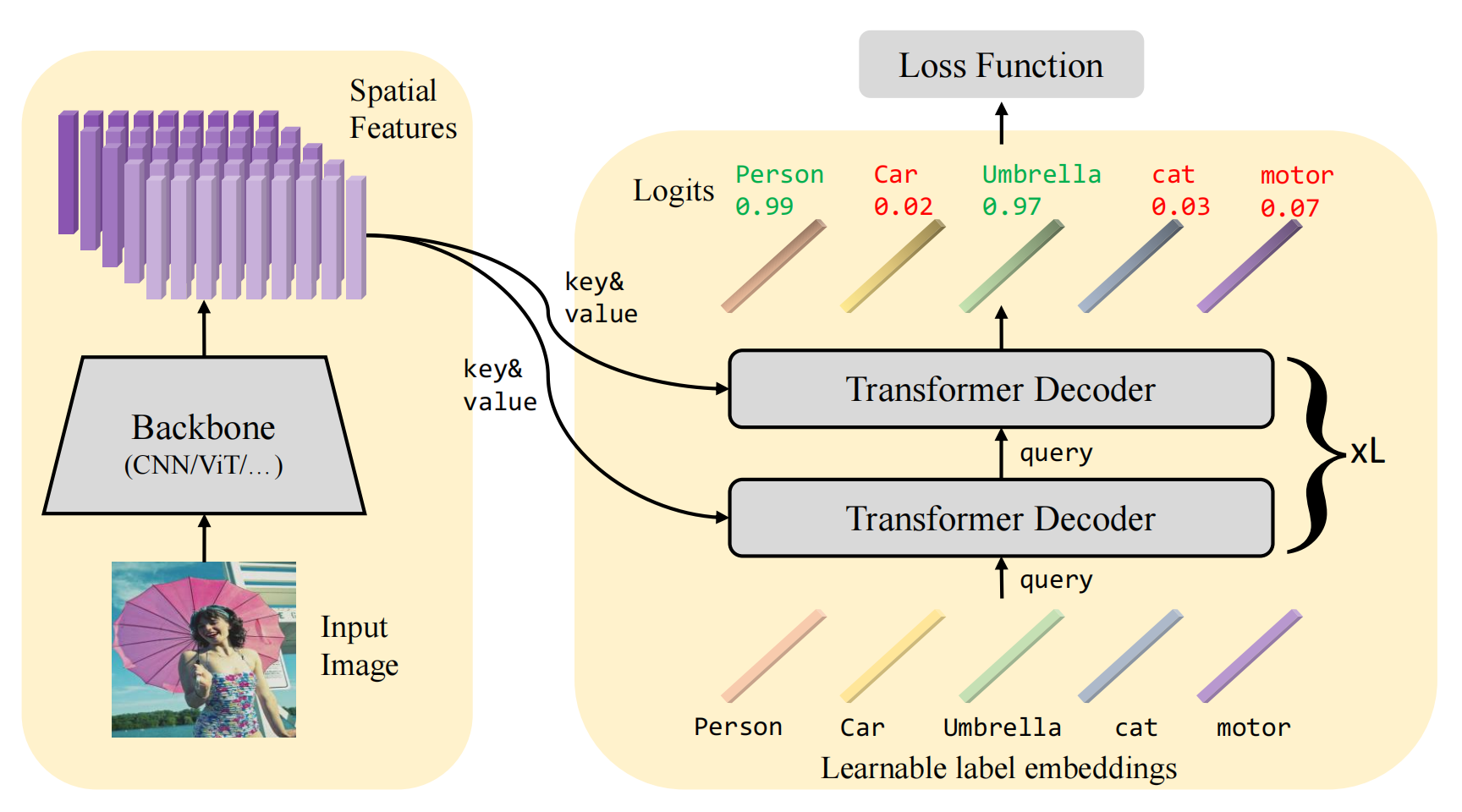

Visual Perception: We proposed a series of improvements on Detection Transformer including DAB-DETR

, DN-DETR

, DINO

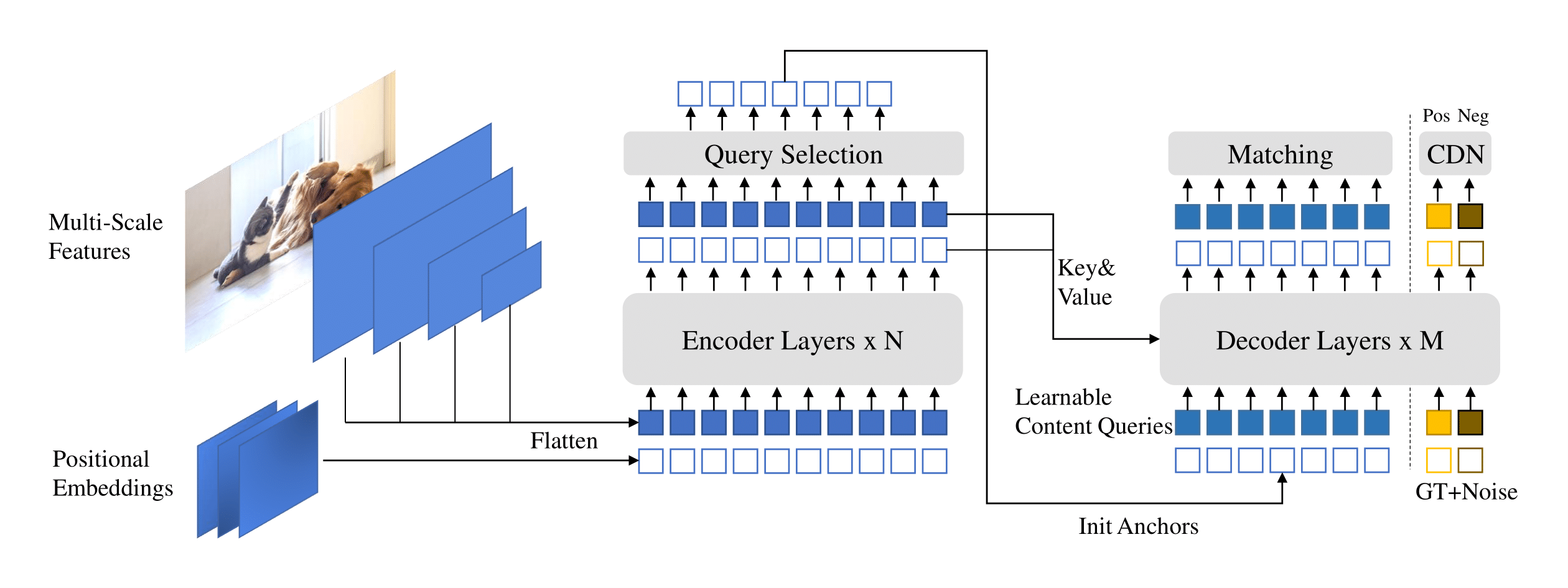

, MaskDINO

, and Stable-DINO

. Notably, DINO was the FIRST DETR-like model to achieve state-of-the-art performance on the COCO object detection leaderboard.

-

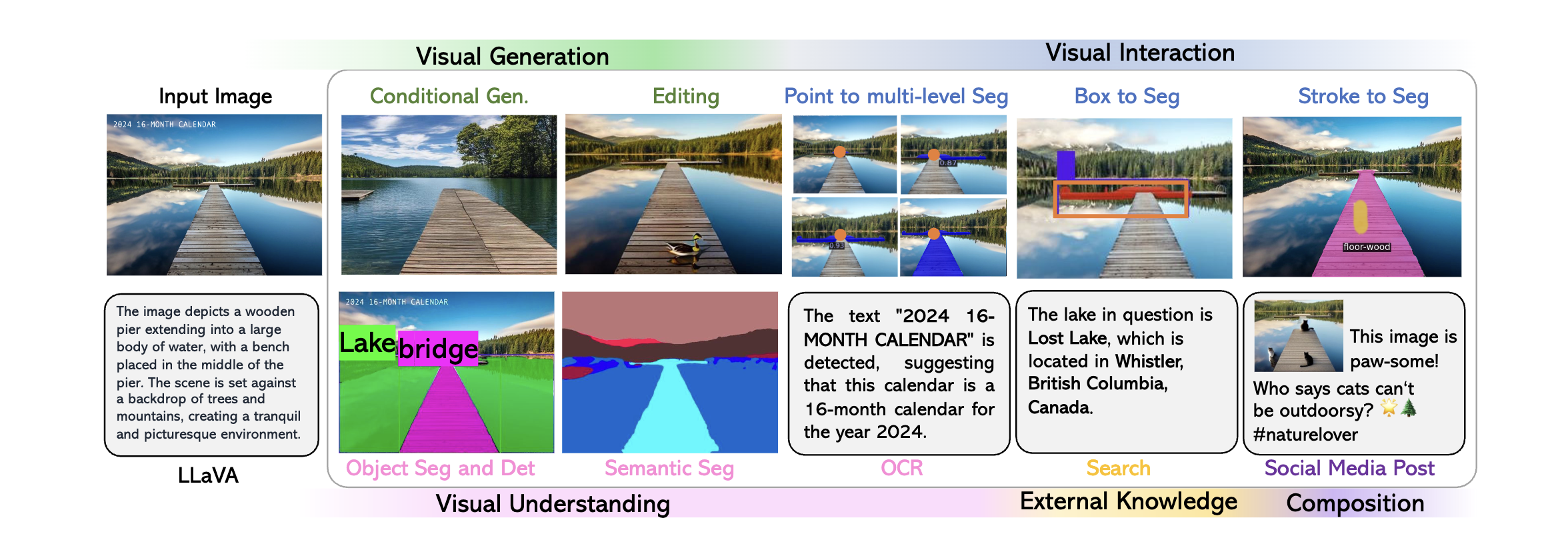

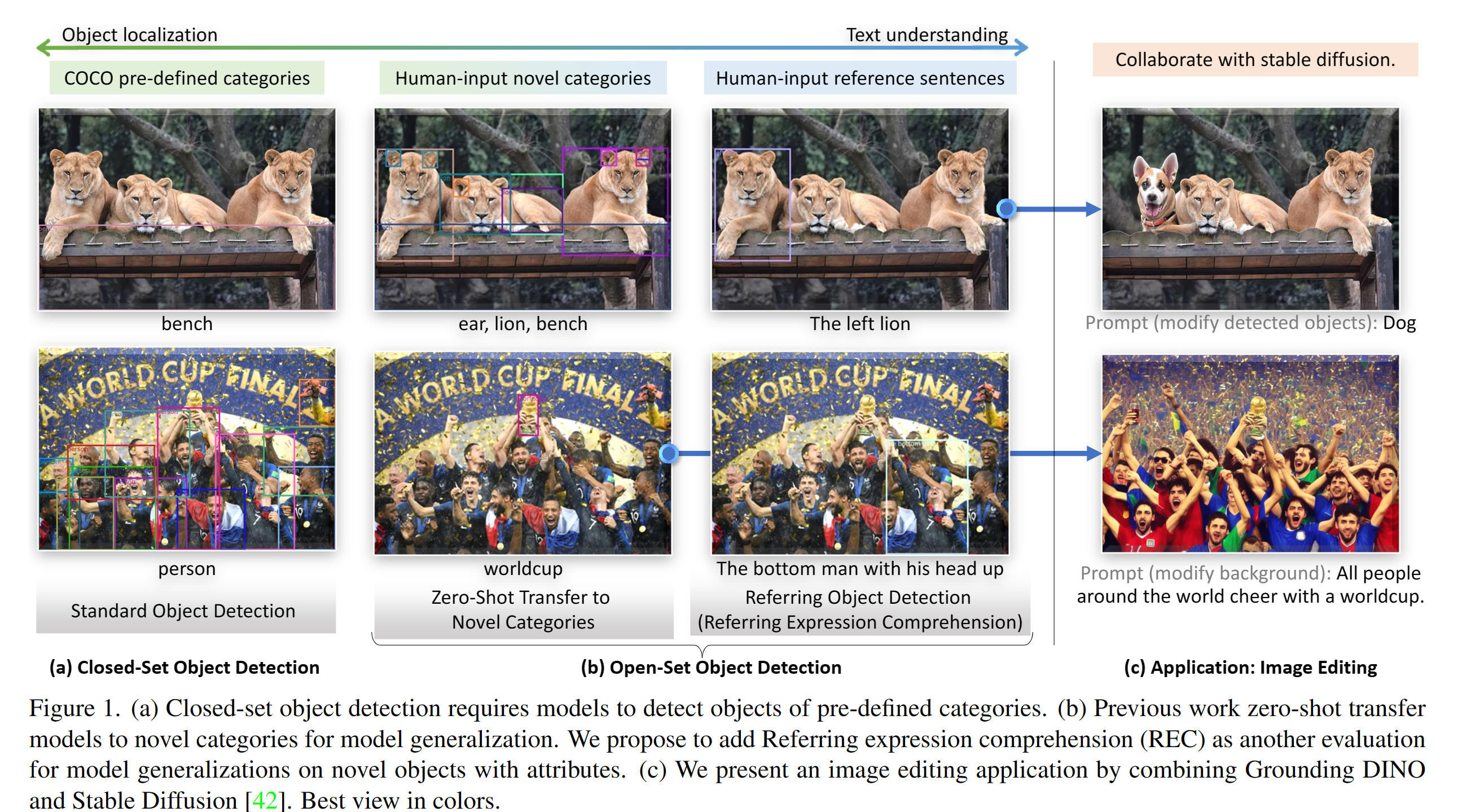

Open-world Visual Understanding/Multi-model learning: Our innovative models Grounding DINO

, Grounded-SAM

, and Grounding-DINO-1.5/1.6/DINO-X have made significant strides in this field. Grounding DINO has become one of the most popular open-world object detection models, enabling us to DETECT and SEGMENT ANYTHING.

- LLM Agents: We proposed Alita, a generalist agent that ranked 1st on the GAIA benchmark, outperforming OpenAI Deep Research. We introduced LLaVA-Plus, which enhances multi-modal large language models with visual expertise, and Crab, a Python-based framework for building and benchmarking LLM agent environments. We also extended agents to specific domains such as medicine and history.

If you’re interested in related topics and would like to collaborate, feel free to reach out by email: slongliu86 [AT] gmail.com. (Note: The email liusl20 [AT] mails.tsinghua.edu.cn will be deprecated; please use the Gmail address instead.)

Feel free to add me on WeChat: SLONG_88 (please include a brief note about yourself when sending a request).

| Google Scholar | GitHub | Zhihu 知乎 | CV |

News

| Date | News |
|---|---|
| Nov 11, 2024 | Invited talk at EECS 542, University of Michigan. [Slides] |
| Jul 22, 2024 | Started my internship at NVIDIA, collaborating with Guilin Liu and Zhiding Yu. See you in the Bay Area, USA. |
| Jul 4, 2024 | I received the 2024 WAIC Yunfan Award – Rising Star. [News] |
| Jul 3, 2024 | Six papers were accepted to ECCV 2024. See their details: |
| May 17, 2024 | We introduce Grounding DINO 1.5, our most powerful open-world object detection model series. View our blog and tech report for more details. Try our demo. |
| Feb 19, 2024 | Invited talk at the Rising Stars in AI Symposium 2024 at KAUST. I really enjoyed the trip. |
| Dec 1, 2023 | DINO and DAB-DETR were named most influential papers for ICLR 2023 and ICLR 2022, respectively. Mask DINO was selected as one of the most influential papers for CVPR 2023. |
| Nov 5, 2023 | I was named a CCF-CV Academic Emerging Scholar 2023 (CCF-CV 学术新锐学者, 3 people per year)! Thanks to the China Computer Federation. |
| Sep 29, 2023 | Invited talks at the Institute for AI Industry Research (AIR) at Tsinghua University, the HPC-AI Lab at the National University of Singapore, the Gaoling School of Artificial Intelligence at Renmin University of China (RUC), and the VALSE Student Webinar. View the slides here (slides about detection, grounding, and large language models). |
| Mar 13, 2023 | We release Grounding DINO, a strong open-set object detection model that achieves the best results on open-set object detection tasks. It achieves 52.5 zero-shot AP on COCO detection, without any COCO training data! It achieves 63.0 AP on COCO after fine-tuning. Code and checkpoints will be available here. |
| Sep 22, 2022 | We release detrex, a toolbox that provides state-of-the-art Transformer-based detection algorithms. It includes DINO with better performance. Feel free to try it! |